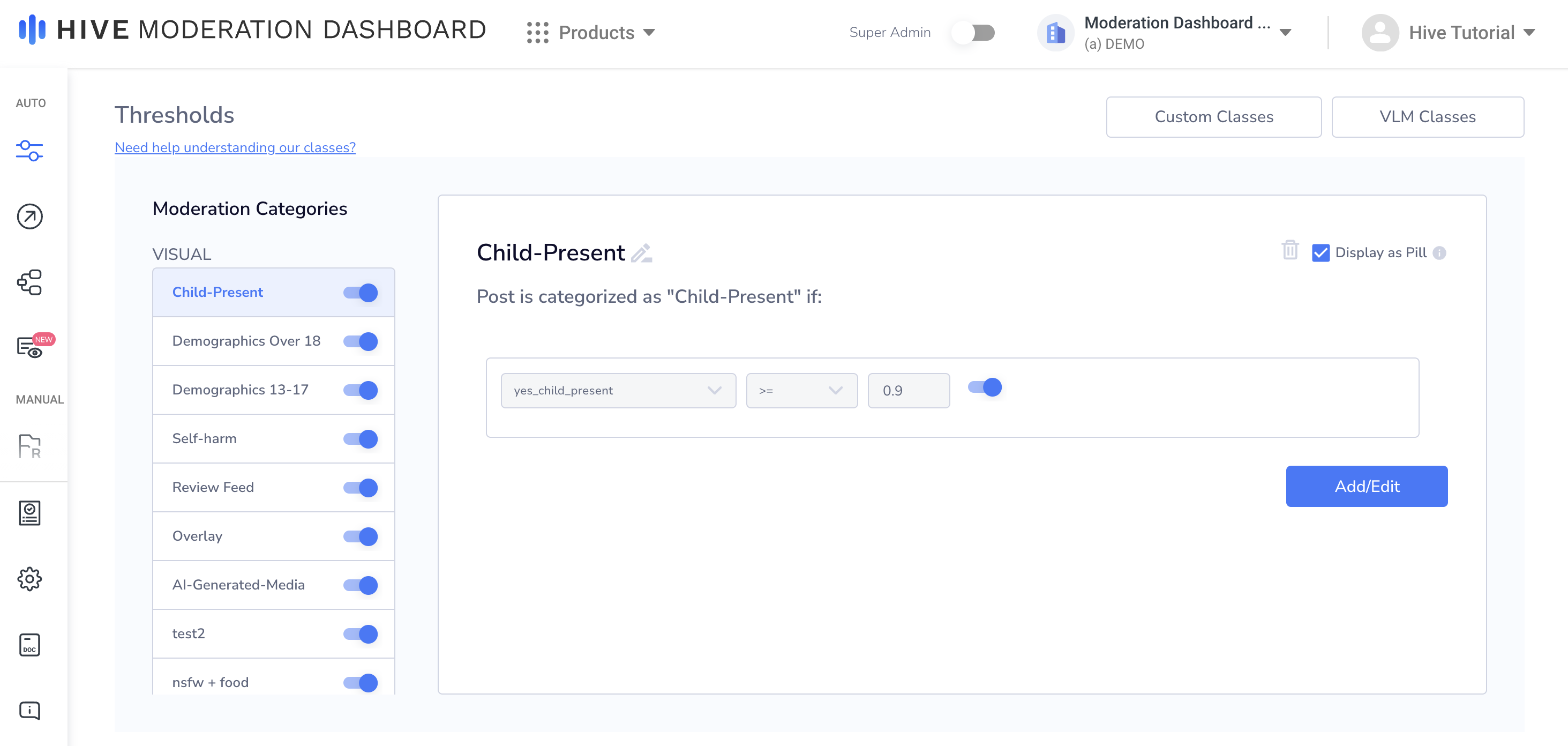

Thresholds

The Thresholds tab lets you automatically categorize content based on the detection scores it receives from the models you’ve enabled. If a post’s detection scores meet the conditions you’ve defined for a moderation category, the post is classified under that category. You can then use these category classifications when you define your moderation rules. Thresholds can be customized to fit your platform’s content policies and risk sensitivities.

How Thresholds Work

- When content is submitted, every model you’ve enabled in Moderation Dashboard runs detection.

- Each model returns confidence scores for its detection classes (e.g., a_little_bloody: 0.92).

- Every active moderation category threshold checks whether any confidence scores match its conditions.

- If a score is within a category’s threshold, the content is classified under that category. For example, if one of the conditions for the "Suggestive" category is general_suggestive >= 0.9, then a post that scores general_suggestive: 0.95 is categorized as "Suggestive".

- These category classifications can be used as actionable signals within your moderation rules' conditions (e.g., “(If a) Post triggers any of the following Moderation Categories…”).

Note: A threshold only triggers if you're running a model that detects its specified classes. For example, a threshold checking for very_bloody >= 0.8, which is a Visual Moderation class, will not trigger if you're only running the OCR model.
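Thresholds are configured in the Moderation Dashboard UI, not in code. Still, the flow above can be sketched in a few lines of Python to make the order of operations concrete. This is a hypothetical model, not a product API: the `THRESHOLDS` table, the "Graphic" category, and the assumption that each model returns a plain `{class: score}` dict with class names unique across models are all illustrative.

```python
# Hypothetical thresholds: category -> (detection class, minimum score).
# Real thresholds can combine several conditions; one is enough to show the flow.
THRESHOLDS = {
    "Suggestive": ("general_suggestive", 0.9),
    "Graphic": ("very_bloody", 0.8),
}

def merge_model_outputs(outputs):
    """Combine the {class: score} dicts returned by each enabled model.

    Assumes class names are unique across models.
    """
    scores = {}
    for output in outputs:
        scores.update(output)
    return scores

def classify(outputs):
    """Return every category whose threshold the merged scores satisfy."""
    scores = merge_model_outputs(outputs)
    categories = []
    for category, (cls, minimum) in THRESHOLDS.items():
        # A class that no enabled model returned can never satisfy the
        # threshold, mirroring the note above.
        if cls in scores and scores[cls] >= minimum:
            categories.append(category)
    return categories

visual = {"general_suggestive": 0.95, "a_little_bloody": 0.92}
print(classify([visual]))  # ['Suggestive']
print(classify([{}]))      # [] because no model returned a relevant class
```

The second call stands in for running only a model (such as OCR) that never produces `very_bloody` or `general_suggestive`: neither threshold can trigger.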

Managing Thresholds

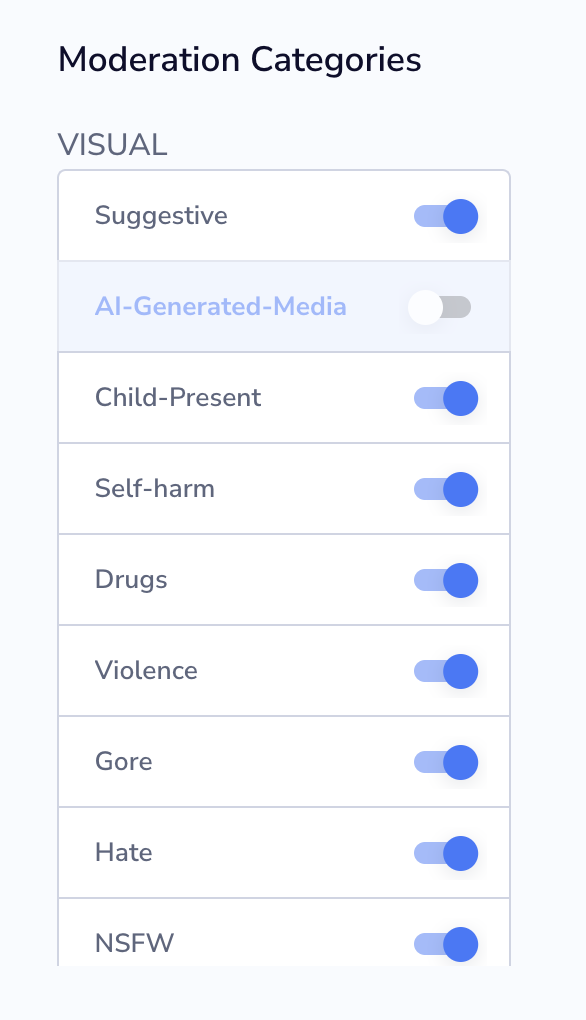

Enabling/Disabling Moderation Categories

Use the toggle switch next to each moderation category name to enable or disable it:

- Enabled: Threshold runs on all content and categorizes matches.

- Disabled: Threshold doesn't run; detection for these classes is skipped entirely.

Display as Pill

Check "Display as Pill" to show the moderation category label throughout Moderation Dashboard (e.g., in moderation queues and content rows). Uncheck it to keep the category active but hide it from the UI. This is useful for categories that feed into rules but don't need visible labels.
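The two per-category settings affect different things: the toggle decides whether a category is evaluated at all, while "Display as Pill" only decides whether a match is labeled in the UI. A minimal sketch of that split, assuming a hypothetical `Category` record (the field and function names are illustrative, not part of the product):

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Category:
    """Hypothetical model of one row in the Thresholds tab."""
    name: str
    enabled: bool = True          # toggle switch next to the category name
    display_as_pill: bool = True  # "Display as Pill" checkbox

def categories_to_evaluate(categories: List[Category]) -> List[Category]:
    # Disabled categories are skipped entirely and never produce a match.
    return [c for c in categories if c.enabled]

def pill_labels(matched: List[Category]) -> List[str]:
    # Matched categories still reach your rules; only some are shown as pills.
    return [c.name for c in matched if c.display_as_pill]

categories = [
    Category("Suggestive"),
    Category("Rule-only signal", display_as_pill=False),
    Category("Graphic", enabled=False),
]
# Pretend every evaluated category matched, to isolate the two settings.
matched = categories_to_evaluate(categories)
print([c.name for c in matched])  # ['Suggestive', 'Rule-only signal']
print(pill_labels(matched))       # ['Suggestive']
```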

Multiple Matching Thresholds

When a piece of content matches multiple thresholds, all matching categories are applied. There is no priority order or exclusivity. For example, content could be tagged as both "graphic_content" and "adult" if it meets both thresholds.
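Because thresholds are independent, evaluating them amounts to checking every one and collecting all hits. The hypothetical sketch below uses the category names from the example above with made-up cutoffs; each condition requires the class to be present and to meet a minimum score:

```python
def at_least(cls, value):
    """Hypothetical single condition: `cls` detected with a score >= value."""
    return lambda scores: cls in scores and scores[cls] >= value

# Hypothetical thresholds keyed by category name.
THRESHOLDS = {
    "graphic_content": at_least("very_bloody", 0.8),
    "adult": at_least("adult", 0.8),
    "suggestive": at_least("general_suggestive", 0.9),
}

def matching_categories(scores):
    # Every threshold is checked independently: there is no priority order
    # and no early exit, so one post can receive several categories.
    return sorted(name for name, check in THRESHOLDS.items() if check(scores))

print(matching_categories({"very_bloody": 0.9, "adult": 0.85}))
# ['adult', 'graphic_content']
```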

Building Conditions

Each threshold is built from one or more conditions that check detection scores or whether specific classes were detected.

Detection Classes

Each condition checks a detection class from your enabled models. The UI for setting a threshold depends on the class: classes scored as whole-number levels (0, 1, 2, 3) use sliders with visual levels, while classes scored as fractional numbers between 0 and 1 use an operator dropdown with numeric input.

Operators

- Comparison Operators (<, >, <=, >=, =): Check whether a detection score meets a threshold (e.g., adult >= 0.8).

- exists: Triggers if the class was detected at all, regardless of score.

- does not exist: Triggers if the class was not detected.
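The operators can be modeled as a single evaluation function. This is a sketch under two assumptions: a class counts as "detected" when it appears in the `{class: score}` output, and a comparison on a class that was never returned is false (consistent with the earlier note about models that don't detect a class). The `evaluate` function is hypothetical.

```python
import operator

# Comparison operators available in the condition dropdown.
COMPARISONS = {
    "<": operator.lt, ">": operator.gt,
    "<=": operator.le, ">=": operator.ge, "=": operator.eq,
}

def evaluate(scores, cls, op, value=None):
    """Evaluate one hypothetical condition against a {class: score} dict."""
    if op == "exists":
        return cls in scores          # detected at all, any score
    if op == "does not exist":
        return cls not in scores      # not detected
    if cls not in scores:
        return False                  # no score to compare against
    return COMPARISONS[op](scores[cls], value)

scores = {"adult": 0.85, "a_little_bloody": 0.02}
print(evaluate(scores, "adult", ">=", 0.8))              # True
print(evaluate(scores, "a_little_bloody", "exists"))     # True
print(evaluate(scores, "very_bloody", "does not exist")) # True
print(evaluate(scores, "very_bloody", "<", 0.5))         # False
```

In this sketch `=` compares values exactly, so it is most natural for whole-number level classes rather than fractional scores.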

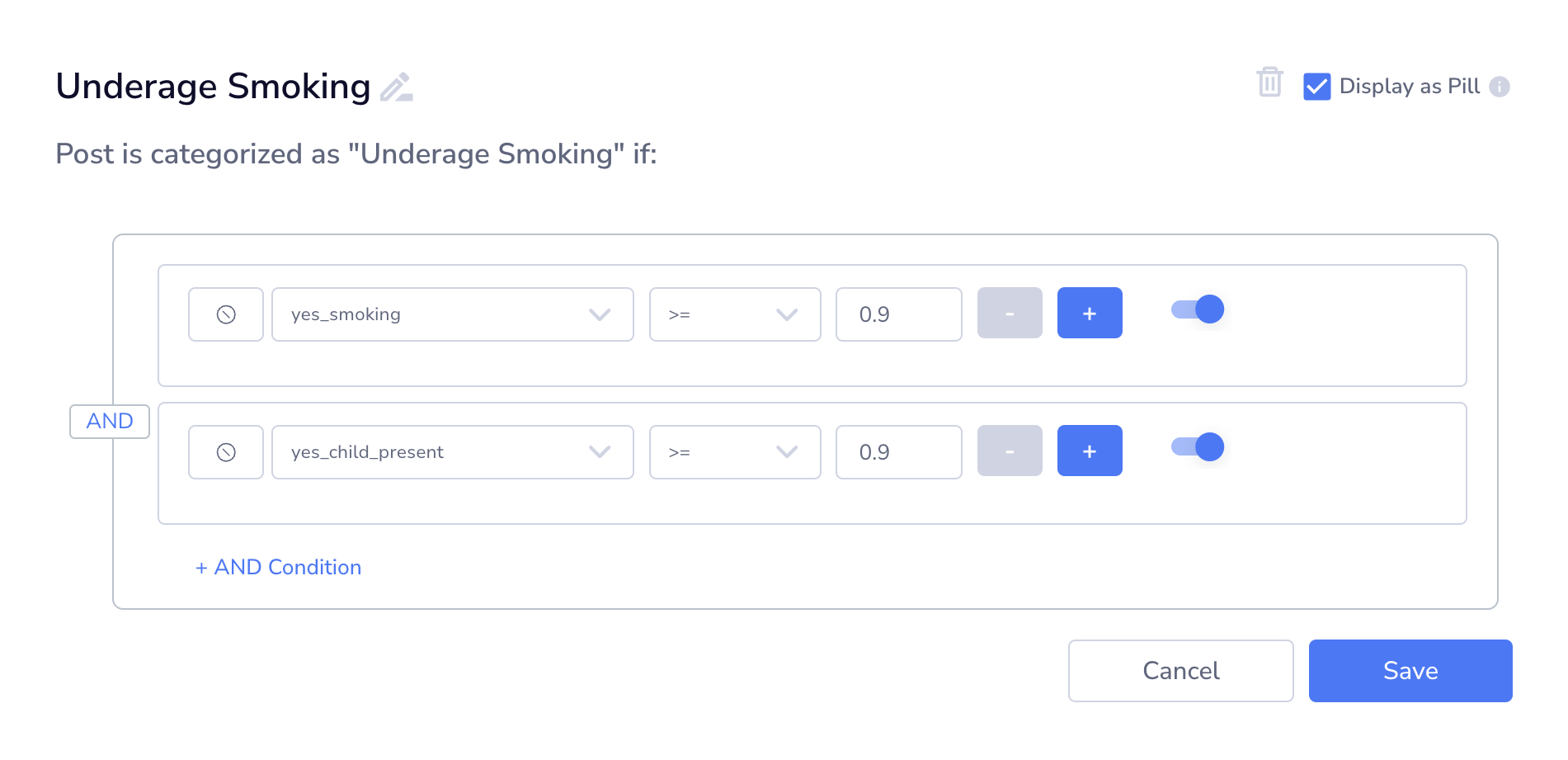

Combining Conditions with AND/OR

Conditions can be organized into groups, with each group having an operator (AND or OR):

- AND Groups: All conditions in the group must be true.

AND Groups

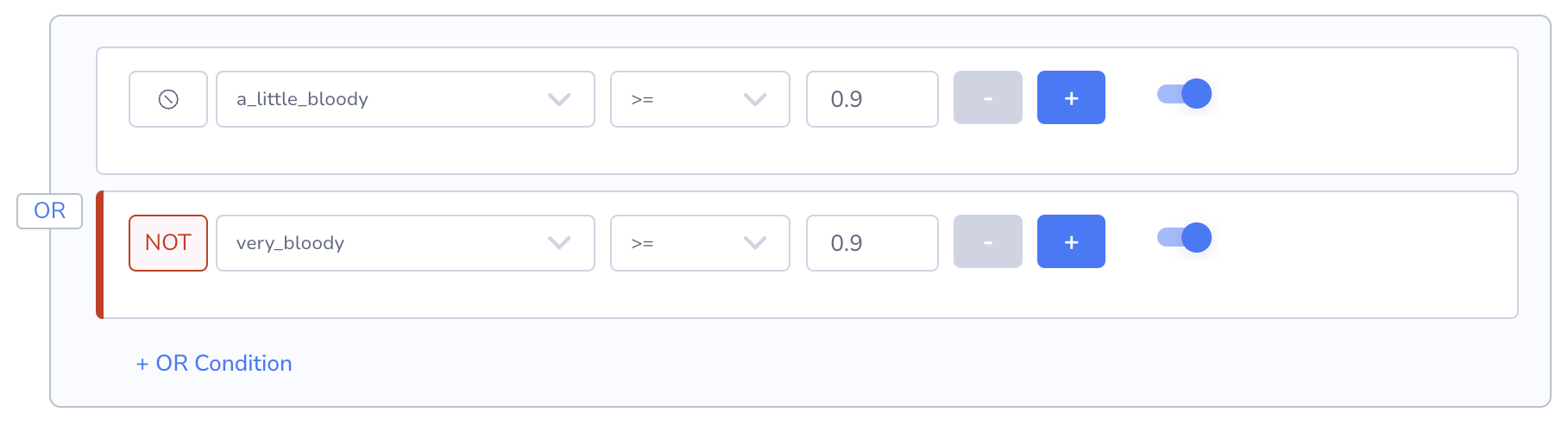

- OR Groups: At least one condition in the group must be true.

OR groups

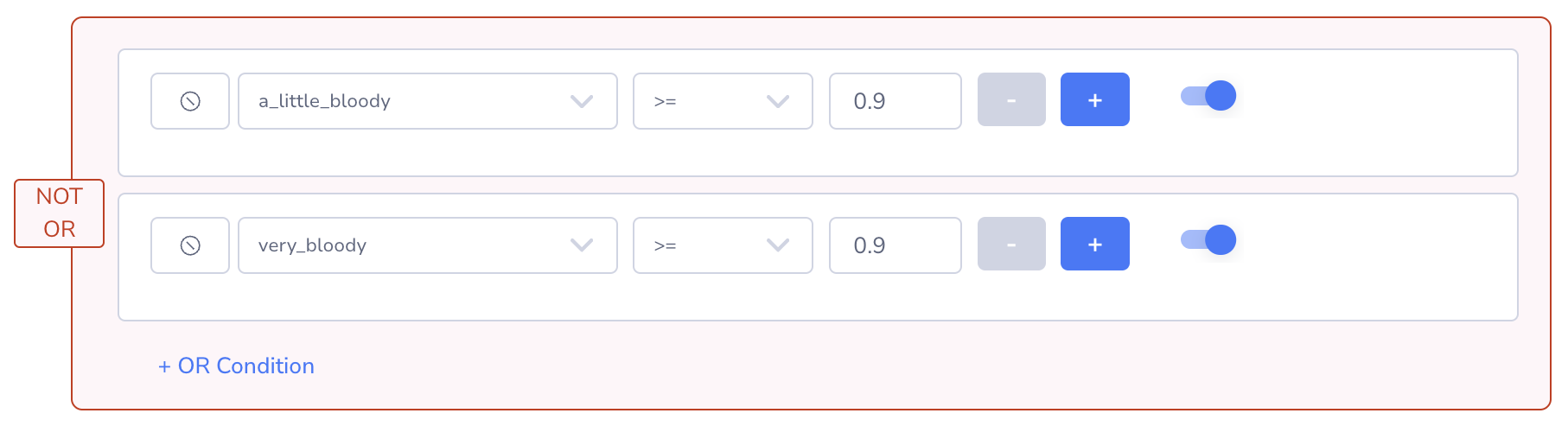
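AND and OR groups map directly onto "all" and "any". The hypothetical sketch below builds one group of each type from the same two conditions (the helper names are illustrative) and shows how a single post can satisfy one but not the other:

```python
from typing import Callable, Dict, List

Scores = Dict[str, float]
Condition = Callable[[Scores], bool]

def at_least(cls: str, value: float) -> Condition:
    """Hypothetical helper: class detected with score >= value."""
    return lambda scores: cls in scores and scores[cls] >= value

def and_group(conditions: List[Condition]) -> Condition:
    # Every condition must hold.
    return lambda scores: all(c(scores) for c in conditions)

def or_group(conditions: List[Condition]) -> Condition:
    # At least one condition must hold.
    return lambda scores: any(c(scores) for c in conditions)

bloody = [at_least("a_little_bloody", 0.8), at_least("very_bloody", 0.9)]
both = and_group(bloody)
either = or_group(bloody)

post = {"a_little_bloody": 0.85}
print(both(post), either(post))  # False True
```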

Negating Conditions and Groups

- NOT (Individual Conditions): Click the ⊗ icon to negate a single condition.

NOT (Individual Conditions)

- NOT (Groups): Toggle NOT on an entire group to negate all its conditions.

NOT (Groups)

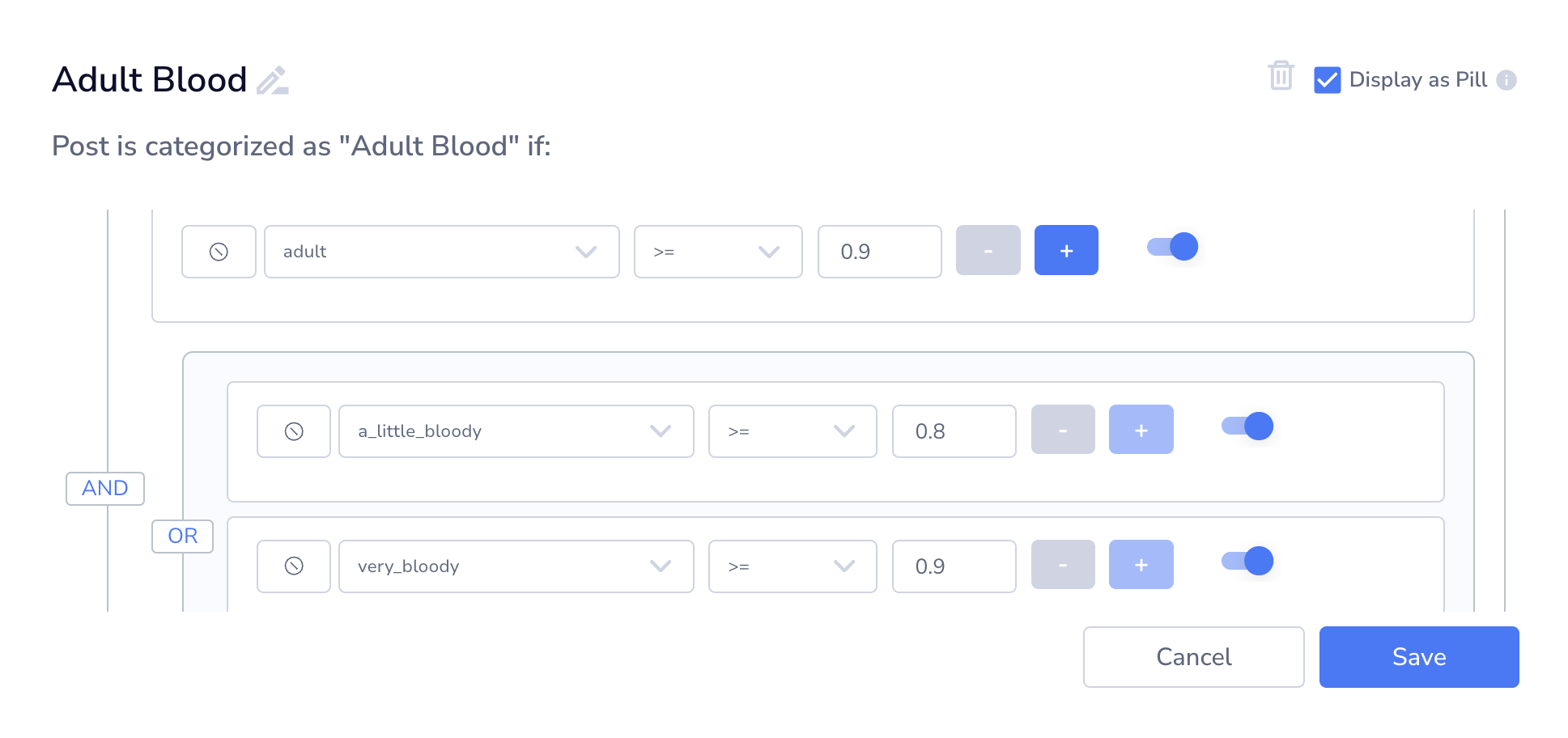
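Negation can be sketched the same way. One interpretation matters here: this hypothetical sketch assumes the group-level NOT toggle negates the group's combined result, i.e. NOT (A OR B). If the product instead negates each condition inside the group individually, the result can differ, so treat this as an illustration of the idea rather than the dashboard's exact semantics.

```python
from typing import Callable, Dict, List

Scores = Dict[str, float]
Condition = Callable[[Scores], bool]

def at_least(cls: str, value: float) -> Condition:
    """Hypothetical helper: class detected with score >= value."""
    return lambda s: cls in s and s[cls] >= value

def not_(cond: Condition) -> Condition:
    """Negate a single condition (the ⊗ icon)."""
    return lambda s: not cond(s)

def group(op: str, conditions: List[Condition], negated: bool = False) -> Condition:
    """AND/OR group; `negated` models the group-level NOT toggle (assumed to negate the combined result)."""
    def check(s: Scores) -> bool:
        results = [c(s) for c in conditions]
        combined = all(results) if op == "AND" else any(results)
        return not combined if negated else combined
    return check

# adult >= 0.8 AND NOT (very_bloody >= 0.8)
adult_not_gory = group("AND", [at_least("adult", 0.8), not_(at_least("very_bloody", 0.8))])
# NOT (a_little_bloody >= 0.5 OR very_bloody >= 0.5): no blood signal at all
no_blood = group("OR", [at_least("a_little_bloody", 0.5), at_least("very_bloody", 0.5)], negated=True)

print(adult_not_gory({"adult": 0.9}))                       # True
print(adult_not_gory({"adult": 0.9, "very_bloody": 0.85}))  # False
print(no_blood({"a_little_bloody": 0.6}))                   # False
```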

Nesting Groups

Groups can be nested inside other groups for complex logic, connected with AND/OR operators between them. To do this, click the blue plus button to the right of a condition within the current group, then select the opposite operator when prompted to create a subgroup:

Nested groups

This evaluates as: (adult >= 0.9) AND (a_little_bloody >= 0.8 OR very_bloody >= 0.9).
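Nested groups form a tree, so they can be evaluated recursively. In the hypothetical sketch below, a condition is a `(class, operator, value)` tuple and a group is an `("AND" | "OR", [children])` tuple; the tree encodes exactly the expression above. This representation is illustrative only and is not how the dashboard stores rules.

```python
import operator

OPS = {">=": operator.ge, ">": operator.gt, "<=": operator.le,
       "<": operator.lt, "=": operator.eq}

def evaluate(node, scores):
    """node is a condition (cls, op, value) or a group ("AND"|"OR", [children])."""
    if node[0] in ("AND", "OR"):
        group_op, children = node
        results = [evaluate(child, scores) for child in children]
        return all(results) if group_op == "AND" else any(results)
    cls, op, value = node
    return cls in scores and OPS[op](scores[cls], value)

# (adult >= 0.9) AND (a_little_bloody >= 0.8 OR very_bloody >= 0.9)
rule = ("AND", [
    ("adult", ">=", 0.9),
    ("OR", [("a_little_bloody", ">=", 0.8), ("very_bloody", ">=", 0.9)]),
])

print(evaluate(rule, {"adult": 0.95, "a_little_bloody": 0.85}))  # True
print(evaluate(rule, {"adult": 0.95, "very_bloody": 0.5}))       # False
print(evaluate(rule, {"adult": 0.5, "very_bloody": 0.95}))       # False
```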

Tip: Plan your condition logic before building. Switching between AND/OR operators after creating conditions requires rebuilding the group, so map out your logic first to avoid rework.

Custom Classes

See Adding Custom Classes to Pattern-Matching for details on creating and managing custom detection categories.

Note: Moderation Dashboard has its own UI so that you can sync these custom classes across many different projects.

Available Detection Classes

For a full list of available detection classes and what they detect, see our model pages listed under "Understand", such as the overviews for Visual Moderation and Text Moderation. Each model's classes are listed on its subpages.
