CSAM Detection - Classifier API

Integrate with our CSAM Detection API for both images and videos

Deprecated Endpoint: Soon, we will no longer offer separate endpoints for our Hash Matching and Classifier CSAM detection models. Moving forward, customers should use our combined endpoint.

Overview

Hive has partnered with Thorn to offer our proprietary CSAM detection API, which uses embeddings to detect novel (previously unseen) child sexual abuse material (CSAM). This classifier differs from our other collaboration with Thorn, the CSAM matching API, which only detects known CSAM. The classifier accepts both images and videos as input.

The classifier works in three steps. First, we create embeddings of the media. An embedding is a list of computer-generated scores between 0 and 1 that represents the content numerically. Next, we permanently delete all of the original media. Finally, the classifier labels the content as CSAM or not CSAM using only the embeddings. This process ensures that we do not store any CSAM.
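
This document does not describe how the embedding model or the classifier work internally, so the following is only a toy illustration of the order of operations described above: compute an embedding, delete the original file, then classify from the embedding alone. The `embed` function (a normalized byte histogram), the linear `classify` function, and the class names `csam` and `not_csam` are all hypothetical stand-ins, not Hive's model.

```python
import math
import os
import tempfile


def embed(media: bytes, dims: int = 8) -> list[float]:
    """Hypothetical stand-in for an embedding model.

    Returns a normalized byte histogram, so every value lies in [0, 1].
    A real model produces learned embeddings; this only mimics the shape.
    """
    counts = [0] * dims
    for b in media:
        counts[b * dims // 256] += 1
    total = max(len(media), 1)
    return [c / total for c in counts]


def classify(embedding: list[float], weights: list[float], bias: float) -> dict[str, float]:
    """Hypothetical linear classifier that sees only the embedding.

    Returns scores for two hypothetical classes that sum to 1.
    """
    z = sum(w * x for w, x in zip(weights, embedding)) + bias
    p = 1.0 / (1.0 + math.exp(-z))
    return {"csam": p, "not_csam": 1.0 - p}


def process(path: str, weights: list[float], bias: float) -> dict[str, float]:
    """Embed the file, delete it, then classify from the embedding alone."""
    with open(path, "rb") as f:
        embedding = embed(f.read())
    os.remove(path)  # the original media is gone before classification runs
    return classify(embedding, weights, bias)


if __name__ == "__main__":
    fd, path = tempfile.mkstemp(suffix=".jpg")
    with os.fdopen(fd, "wb") as f:
        f.write(b"placeholder bytes, not real media")
    scores = process(path, weights=[0.1] * 8, bias=0.0)
    print(scores, "file still exists:", os.path.exists(path))
```

The point of the structure is that `classify` never receives the file or its bytes, only the embedding, and the file is removed before `classify` is called.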

Response

For each class, the classifier returns a confidence score between 0 and 1, inclusive. The scores across all classes sum to 1.

For an annotated example of this model's API response object, see our API Reference.
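
As a client-side sanity check, the sketch below validates a set of per-class scores against the two properties stated above (each score in [0, 1], scores summing to 1) and returns the top class. It assumes you have already extracted a `{class_name: score}` mapping from the response; the real field and class names are documented in the API Reference, and the names in the usage line are hypothetical. The default tolerance of 1e-3 is an assumption meant to absorb rounding in reported scores.

```python
import math


def top_class(scores: dict[str, float], tol: float = 1e-3) -> tuple[str, float]:
    """Check per-class confidence scores and return the highest-scoring class.

    `scores` maps class name -> confidence score, already extracted from
    the API response. `tol` allows for rounding in the reported scores.
    """
    if not scores:
        raise ValueError("response contained no class scores")
    for name, score in scores.items():
        # Each score should lie in [0, 1], inclusive.
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"score for {name!r} is outside [0, 1]: {score}")
    # Scores across all classes should sum to 1.
    total = math.fsum(scores.values())
    if abs(total - 1.0) > tol:
        raise ValueError(f"scores sum to {total}, expected 1")
    best = max(scores, key=scores.__getitem__)
    return best, scores[best]
```

For example, `top_class({"csam": 0.02, "not_csam": 0.98})` returns `("not_csam", 0.98)`.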

Supported File Types

Image formats:

- jpg
- png

Video formats:

- mp4
- mov
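
To reject unsupported files before making a request, a client could check the file extension against the formats listed above. This is a minimal sketch: it trusts the extension rather than inspecting file contents, and it accepts only the spellings listed here, so `.jpeg` is rejected even though it names the same format. Extend the sets if your files use other spellings and the API accepts them.

```python
from pathlib import Path

# Formats listed under "Supported File Types".
IMAGE_EXTENSIONS = {".jpg", ".png"}
VIDEO_EXTENSIONS = {".mp4", ".mov"}


def media_kind(path: str) -> str:
    """Return "image" or "video" for a supported file, based on its extension only."""
    ext = Path(path).suffix.lower()  # case-insensitive: "clip.MOV" -> ".mov"
    if ext in IMAGE_EXTENSIONS:
        return "image"
    if ext in VIDEO_EXTENSIONS:
        return "video"
    raise ValueError(f"unsupported file type {ext or '(no extension)'!r} for {path!r}")
```

For example, `media_kind("uploads/photo.PNG")` returns `"image"`, while `media_kind("clip.gif")` raises `ValueError`.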

Integrating with the CSAM Detection API

Each Hive model (Visual Moderation, CSAM Detection, etc.) lives inside a “Project” that your Hive rep will create for you.

- The CSAM Detection Project comes with its own API key. Use it in the Authorization header whenever you call our endpoints.

- If you also use other Hive models, keep their API keys separate from your CSAM Detection key, even though all models share the same API URL.

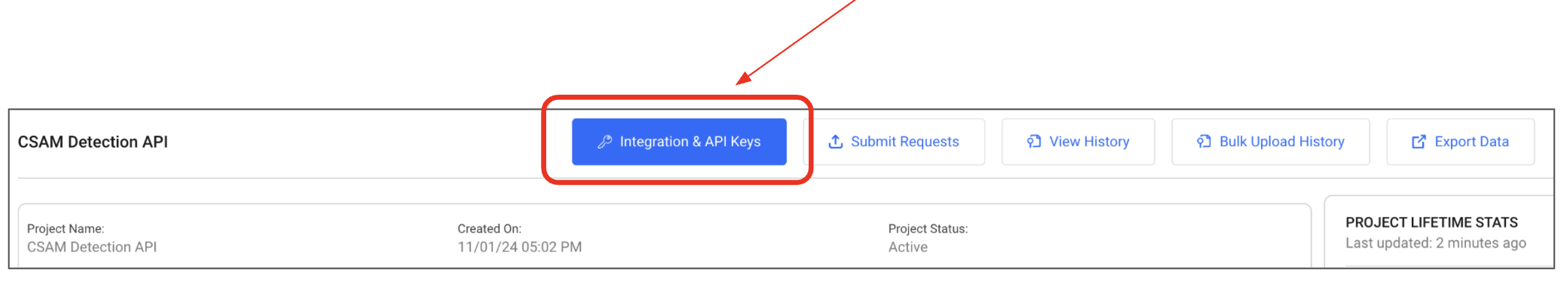

- Tip: In the Hive dashboard, open Projects → find your CSAM Detection Project → click Integration & API Keys to copy the correct key.
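
The sketch below shows one way to keep each project's key separate and attach the CSAM Detection key to the Authorization header described above, using only Python's standard library. Several pieces are assumptions: the environment variable names are hypothetical, the `Token` authorization scheme should be confirmed in the API Reference, and the endpoint URL, request body, and content type are left to the caller because this page does not specify them.

```python
import os
import urllib.request

# Hypothetical environment variable names: one API key per Hive project.
PROJECT_KEY_VARS = {
    "csam_detection": "HIVE_CSAM_API_KEY",
    "visual_moderation": "HIVE_VISUAL_MODERATION_API_KEY",
}


def auth_header(project: str, scheme: str = "Token") -> dict[str, str]:
    """Build the Authorization header from the given project's key.

    The "Token" scheme is an assumption; confirm it in the API Reference.
    """
    var = PROJECT_KEY_VARS[project]
    key = os.environ.get(var)
    if not key:
        raise RuntimeError(f"set {var} to the API key of your {project} project")
    return {"Authorization": f"{scheme} {key}"}


def build_csam_request(url: str, body: bytes, content_type: str) -> urllib.request.Request:
    """Prepare (but do not send) a POST request authenticated with the CSAM Detection key.

    `url`, `body`, and `content_type` must follow the API Reference; none are assumed here.
    """
    headers = auth_header("csam_detection")
    headers["Content-Type"] = content_type
    return urllib.request.Request(url, data=body, headers=headers, method="POST")
```

Passing the result to `urllib.request.urlopen` sends the request. Looking the key up by project name, rather than from a single shared variable, makes it harder to send a Visual Moderation key to the CSAM endpoint by mistake, even though both use the same API URL.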
